Every month, Aviator attracts tens of millions of players worldwide! In Aviator you can win simply by having your stake multiplied by a coefficient of up to ×100. This means that if you bet just 300 tenge, you get 30,000 tenge. And you can win as much as $5,000!

This is new-generation gambling entertainment. Win many times your stake in seconds! Aviator kz is built on the Provably Fair system, which lets every player independently verify the fairness of each round.

But remember! If you fail to cash out before the plane takes off for Astana, or you are caught off guard, your stake burns. Aviator kz is excitement, risk and victory!

The real-money online casinos 1win and 1xbet named Aviator one of the most popular real-money games in Kazakhstan in 2022 and 2023.

| Parameter | Value |
| --- | --- |
| 🎯 Release date | January 2019 |
| ⭐ Provider | Spribe |
| 📄 License | Spribe OÜ is licensed and regulated in Great Britain by the Gambling Commission under account number 57302 |
| 🔀 Kazakh language support | Yes |
| ☝️ RTP | 97% |
| ☝️ Volatility | Low |
| 💲 Minimum bet | $0.10 (50 tenge) |
| 💲 Maximum bet | $100 (45,000 tenge) |
| 💰 Largest win per round | $10,000 |
| ➕ Coefficient | ×1-×100 |
| 🎫 Account currency | USD, EUR; KZT available |
| 🎁 Bonuses | Bonus round +15 FS, gamble feature |
| ▶️ Auto cashout | Yes |
| 🚀 Demo mode | Yes |
| 📣 Technologies | JS, HTML5 |

The Essence of the Game

Aviator lets you feel like a pilot who can take the plane to any altitude. Here altitude stands for the coefficient (multiplier) that is applied to your bet when you win.

The key is not to overdo it and to end the flight at the right moment. Put simply, you need to press the cash-out button before the plane stops climbing (the multiplier stops growing).

If the round ends before you cash out, your stake is lost. But if you keep greed in check and are content with a ×2-×3 multiplier, your chances of success are at their best!

What You Need to Know

- The winning multiplier starts at 1x and grows as the plane gains altitude.

- Your winnings equal the multiplier at which you cashed out times your bet amount.

- Before each round starts, a provably fair random number generator produces the multiplier at which the plane will fly away. You can check the fairness of every round with the tools available in the game.
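The payout rule in the list above can be sketched in a few lines of Python. This is a minimal illustration, not Spribe's code: the function name `payout` is hypothetical, and treating a cash-out target exactly equal to the crash point as a win is an assumption (operators may resolve that edge case differently).

```python
from decimal import Decimal


def payout(bet: Decimal, cashout_multiplier: Decimal, crash_point: Decimal) -> Decimal:
    """Return what one bet pays out in a single round.

    Hypothetical helper: the bet pays bet * cashout_multiplier if the player
    cashed out before the plane flew away, otherwise nothing.
    Assumption: cashing out exactly at the crash point counts as a win.
    """
    if cashout_multiplier < 1:
        raise ValueError("the multiplier starts at 1x")
    if cashout_multiplier <= crash_point:
        return bet * cashout_multiplier
    return Decimal("0")  # the plane left first, so the stake is lost


# The introduction's example: 300 tenge cashed out at x100.
print(payout(Decimal("300"), Decimal("100"), Decimal("150")))  # 30000
```

`Decimal` is used instead of `float` so that money amounts are multiplied without binary rounding error.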

Game Rules

In the app you can play at your favourite clubs in Kazakhstan without restrictions. The gaming app opens instantly and barely uses device memory, since the plane game takes up less than 2 MB. Downloading and installing the app takes only a few minutes. Download links are posted in a dedicated section of the clubs' official websites.

All you see on the screen is a climbing plane, until it flies off the screen! In my opinion, Aviator is an interesting game. I started playing recently and have had a few small wins. I'm very pleased :)

- Many ready-made strategies also let you start winning right after your first deposit. Even beginners can win.

- Yes, there are several tactics that help you profit with both minimum and maximum bets.

- A big win in Aviator Kazakhstan is not possible with this approach, but small amounts can regularly add to the player's balance.

- You can top up your account in several ways (bank card, e-wallets, cryptocurrency, payment terminal).

The virtual club invites visitors to register and confirm their identity through verification. The system lets you place bets in Aviator securely and start winning.

Game Algorithm

The Aviator kz algorithm is as simple as it gets. Each round you place a bet, and the game starts increasing the multiplier. At a random moment the coefficient stops rising, and players who have not cashed out by then lose their stakes.

Notably, the fairness of Aviator by Spribe and the online casino's non-interference in the results are ensured by Provably Fair technology. More specifically, the result of each round (the multiplier at which the plane flies away) is generated on the servers of the virtual casino.

Players taking part in the round also contribute to generating it, which makes the process fully transparent. In addition, anyone can check and confirm the platform's fairness.
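One common way to make a server-generated result checkable is a commit-reveal scheme: the server publishes a hash of its secret seed before the round and reveals the seed afterwards. The sketch below assumes SHA-256 for the commitment; the helper names `commit` and `verify_commitment` are hypothetical, and this is an illustration of the general technique rather than Spribe's documented procedure.

```python
import hashlib
import hmac
import secrets


def commit(server_seed: str) -> str:
    """Hash the secret server seed; this digest is published before the round."""
    # Assumption: SHA-256 is used for the commitment.
    return hashlib.sha256(server_seed.encode("utf-8")).hexdigest()


def verify_commitment(revealed_seed: str, published_hash: str) -> bool:
    """After the round, re-hash the revealed seed and compare with the commitment."""
    return hmac.compare_digest(commit(revealed_seed), published_hash.lower())


server_seed = secrets.token_hex(16)   # kept secret until the round ends
published = commit(server_seed)        # shown to players in advance
print(verify_commitment(server_seed, published))        # True
print(verify_commitment(server_seed + "x", published))  # False
```

Because the hash is fixed before any bets are placed, the server cannot swap its seed after seeing the bets without the mismatch showing up.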

Main features: how to play Aviator kz

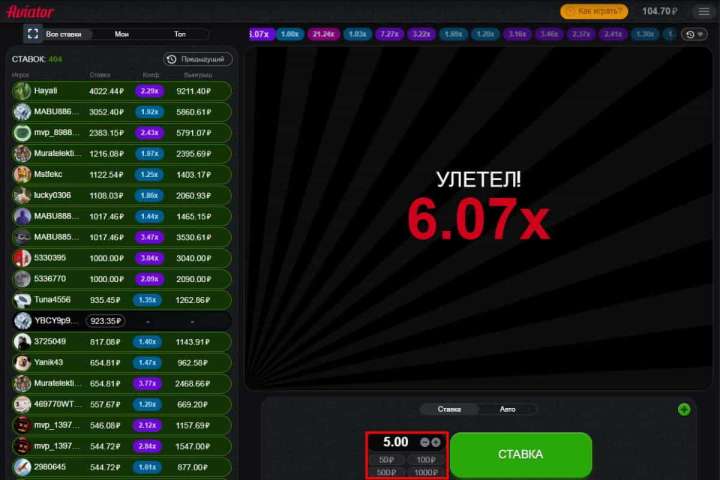

Bet and Cash Out

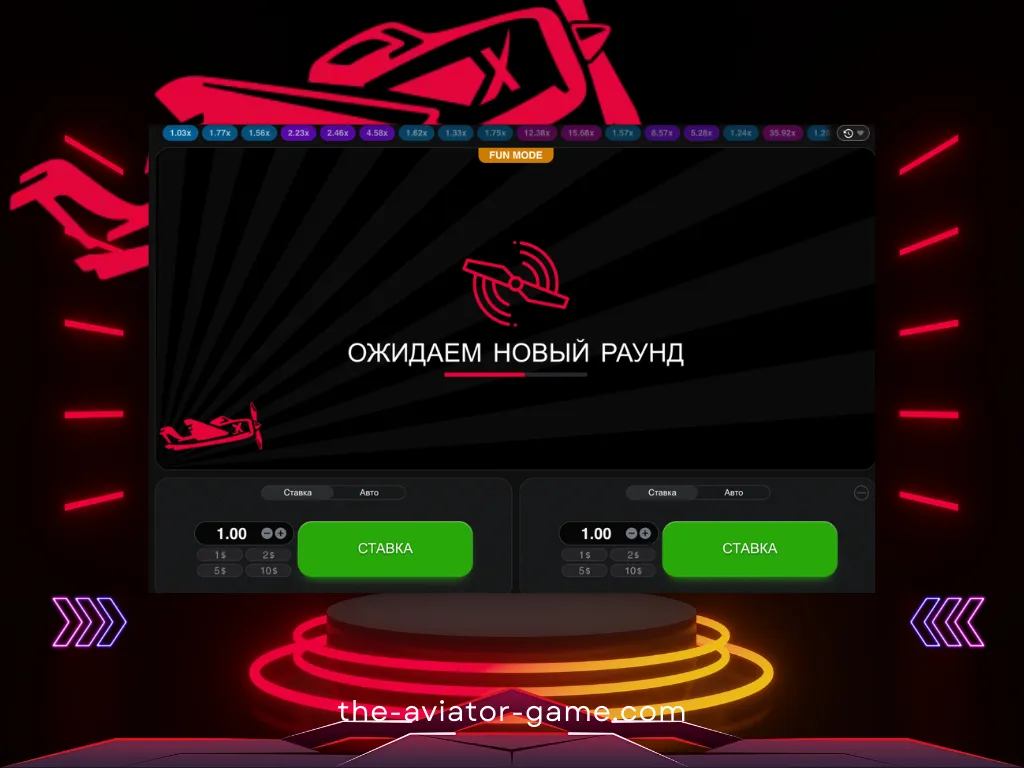

- To place a bet, choose the amount and press the "Bet" button.

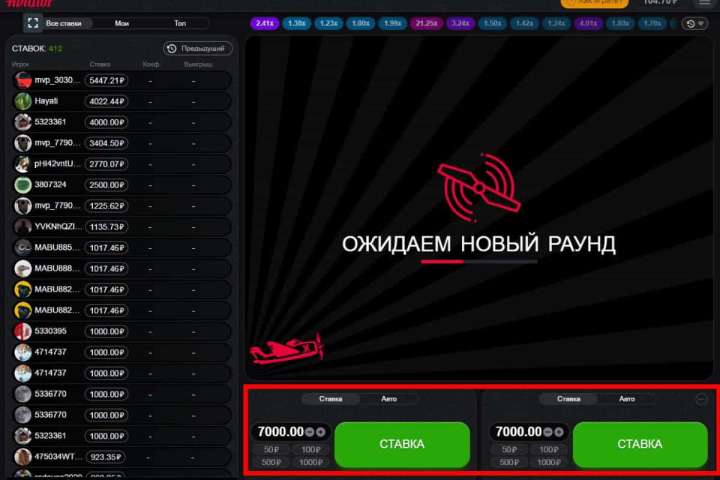

- By adding a second bet panel, you can place two bets in parallel. To add it, press the plus icon in the top-right corner of the bet panel.

- To collect your winnings, press the "Cash Out" button. Your winnings equal your bet multiplied by the multiplier at which you cashed out.

Auto Play and Auto Cashout

- Auto bet is enabled in the "Auto" menu of the bet panel by ticking the "Auto bet" box. Once it is on, your bet is placed automatically every round, but you still have to press the cash-out button each round to collect your money. If you wish, you can also use auto cashout.

- Auto cashout is available in the "Auto" menu of the bet panel. Once it is on, your bet is cashed out automatically when the multiplier reaches the coefficient you set.
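Auto bet and auto cashout together amount to a simple loop: place the stake every round and take the payout automatically whenever the multiplier reaches your target. The simulation below is a hypothetical sketch over a list of crash points you supply; `run_auto_play` is not part of the game, and it assumes a target equal to the crash point still pays out.

```python
def run_auto_play(balance: float, bet: float, target: float,
                  crash_points: list[float]) -> float:
    """Simulate auto bet + auto cashout over a given sequence of rounds.

    Returns the balance after the rounds are played (or after funds run out).
    Assumption: a target equal to the crash point counts as a successful cashout.
    """
    for crash in crash_points:
        if balance < bet:
            break                      # not enough money for the next auto bet
        balance -= bet                 # auto bet places the stake
        if target <= crash:            # auto cashout fires before the plane leaves
            balance += bet * target
    return balance


# Three rounds at a x2 target: lost, won, won.
print(run_auto_play(100.0, 10.0, 2.0, [1.5, 3.0, 2.0]))  # 110.0
```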

How to Check Aviator's Fairness?

Click a result in the history (the played multipliers at the top of the game window). The window that opens shows the server seed, 3 player seeds, the combined hash and the round result. You can check that the hash is correct with any online hash calculator.
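The online-calculator check can also be done locally. The sketch below assumes the combined hash is SHA-512 over the server seed followed by the three player seeds, concatenated without separators; that ordering and encoding are assumptions, so if your recomputed digest never matches, compare against the game's own fairness description. The helpers are hypothetical, and they verify only the hash, not how the hash is turned into a multiplier.

```python
import hashlib


def combined_hash(server_seed: str, player_seeds: list[str]) -> str:
    """SHA-512 of the server seed followed by the player seeds (assumed layout)."""
    data = server_seed + "".join(player_seeds)
    return hashlib.sha512(data.encode("utf-8")).hexdigest()


def verify_round(server_seed: str, player_seeds: list[str], shown_hash: str) -> bool:
    """Recompute the combined hash and compare it with the one shown in the game."""
    if len(player_seeds) != 3:
        raise ValueError("the round window lists three player seeds")
    return combined_hash(server_seed, player_seeds) == shown_hash.strip().lower()


# Paste the values from the round window in place of these placeholders.
seeds = ["p1", "p2", "p3"]
shown = combined_hash("server", seeds)
print(verify_round("server", seeds, shown))  # True
```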

Aviator kz Demo Mode

Anyone who wants to try Aviator without risking real money can play the demo version, which the provider built into the game. You don't need an account to play Aviator for free on Spribe's site. Most reliable and popular online casinos in Kazakhstan that offer real slot machines also let you play them completely free, with no money and no promo code.

Betting Options for Every Pilot

Aviator suits every type of player. Whether you see yourself as a low roller, a high roller or a mid-stakes player, Aviator is a great fit. It supports a wide range of bet sizes, and your strategy can help you win.

Low-stakes players in Kazakhstan can bet as little as 7 tenge! High rollers, meanwhile, can place maximum bets and apply their own strategy. Between the minimum and the maximum there are several flexible options for mid-level players. And if none of the preset amounts suits you, you can always enter the amount manually.

How to Start Playing?

Most online casino sites offer Aviator. But the game differs from standard slot machines in that it has no:

- reels,

- characters,

- payouts along paylines.

The game takes place in a setting likened to an airport, or more precisely an air-traffic controller's screen.

The game starts with a plane about to take off. As the plane takes off, the coefficient starts rising automatically. At first the multiplier is at its minimum, 1x. The higher the plane flies, the higher the multiplier.

The coefficient can rise almost without limit. This means the winning potential in Aviator is huge, since the maximum reward keeps growing with each new round, and there are about two million of them in the game. This figure can differ depending on the gaming site you choose.

Betting in Aviator

When playing Aviator, players start by placing their bets. The provider offers many bet options: players can pick a preset amount or enter one manually. Once the bet is placed, the next step is to cash it out before the plane disappears.

That's the whole point: knowing the right moment to cash out makes the difference between winning and losing, and between a big and a small prize. Players need to learn to sense when to cash out, while the multiplier is high and the plane is still climbing.

Once online casino customers grasp this, Aviator can become a profitable adventure! So all the risk in this game comes down to a simple dilemma: cash out early and miss the big prizes, or wait for potentially bigger money and risk losing everything.

CashOut is the large button right below the game area. It was designed so that players can press it easily once they have judged their chances. Losses hit players who hold on too long and lose control of the plane.

Where to Play Aviator? TOP-7 Sites

The game is available at many online casinos in Kazakhstan. We recommend playing Aviator only on a trusted, official site or through the casino's mobile app.

Aviator: How to Play and Win for Real Money?

Chase the big and you'll lose the small. That is how we would sum up the basic tactic for playing Aviator profitably. Losing play and a drained deposit come down to greed and lack of self-control, so we recommend a completely different approach, one aimed at letting you cash out the most.

So the first step is to decide on the size and type of your bets. The minimum bet is 30 tenge, and you can place at most two bets at once. Strategies for one bet and for two simultaneous bets differ, so we cover them separately.

Strategy for a Single Bet

Single-bet play suits beginners best. You'll have all the information gathered in Aviator at your disposal. First, decide on the deposit balance you'll start with: this choice determines the stake we set.

At an online casino, one deposit should last for 200 bets (at least 100). For example, with 3,500 tenge in your account it's best to bet 35 tenge (100 bets). With 7,000 tenge, you can bet 35 tenge each time (200 bets).
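The "200 bets, at least 100" rule is plain integer division. The hypothetical helper `stake_range` below returns the recommended stake (the balance covers 200 bets) and the largest acceptable stake (the balance still covers 100 bets), rounded down to whole tenge.

```python
def stake_range(balance: int, target_bets: int = 200, min_bets: int = 100) -> tuple[int, int]:
    """Return (recommended, largest acceptable) stake in whole tenge.

    recommended: the balance lasts for target_bets bets
    largest acceptable: the balance still lasts for min_bets bets
    """
    if balance <= 0 or min_bets <= 0 or target_bets < min_bets:
        raise ValueError("need a positive balance and target_bets >= min_bets > 0")
    return balance // target_bets, balance // min_bets


print(stake_range(3_500))  # (17, 35)
print(stake_range(7_000))  # (35, 70)
```

With 3,500 tenge the recommended 17 tenge falls below the 35-tenge minimum quoted in the FAQ, which is why the 100-bet bound of 35 tenge is the practical choice there.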

Once the per-round stake is set, we need to choose a strategy. The tactics fall into three types:

- Low-risk tactic for real-money Aviator. This strategy won't bring a big prize quickly, but it lets you play comfortably, keep losses to a minimum and avoid wasting money. The idea is to play low coefficients: every round we cash out at ×1.20-×1.21 (enable auto cashout and auto bet). This minimizes the number of lost rounds and aims to grow the balance steadily. Once the balance has grown, you can move to higher stakes and so speed up growth in Aviator.

- Moderate-risk tactic for real-money Aviator. We recommend this strategy to players who are not tight on funds. With this tactic we play coefficients of ×2-×3. The probability of results with a multiplier of 2-3 is 40-42%. But sometimes, when you're confident and there hasn't been a big multiplier for a while, you can risk a bet on a larger multiplier, which will hopefully not only keep you on the edge of your seat but also grow a deposit you can withdraw.

- High-risk tactic for quick profit in Aviator. This strategy is for the truly lucky! Coefficients above ×100 come up on average once every hour and a half. So we note when the last ×100+ result occurred, wait about an hour, and then start betting actively. Good luck!
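To compare the three tactics, it helps to see how the cash-out target trades off against the chance of winning. The sketch below is a model, not Spribe's published math: it assumes the idealized crash distribution often used to describe crash games, P(crash ≥ m) = RTP / m for m ≥ 1, with RTP = 97% taken from the table at the top. The function names are hypothetical.

```python
RTP = 0.97  # from the game's data table


def win_probability(target: float, rtp: float = RTP) -> float:
    """Chance the plane reaches `target` before flying away.

    Assumed model: P(crash >= m) = rtp / m for m >= 1.
    """
    if target < 1:
        raise ValueError("cash-out targets start at 1x")
    return min(1.0, rtp / target)


def expected_return(bet: float, target: float, rtp: float = RTP) -> float:
    """Average amount paid back per bet under the model: bet * target * P(win)."""
    return bet * target * win_probability(target, rtp)


for target in (1.2, 2.0, 3.0, 100.0):
    print(f"x{target:g}: win chance {win_probability(target):.1%}, "
          f"average return on 100 tenge {expected_return(100, target):.2f}")
```

Under this model, a ×1.20 target wins roughly four rounds in five and ×100 about one round in a hundred, while the average amount returned per bet stays at about 97% of the stake for every target.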

Aviator kz Real-Money Strategy for Two Simultaneous Bets

This tactic works the same way as single-bet play but demands more concentration. The best option is moderate-risk play. For the first bet we recommend auto bet with auto cashout at ×1.2, and playing the second bet with the moderate-risk strategy.

If you want to try two riskier bets, we suggest stopping at ×40 on one and ×100 on the other. Even so, don't spend your whole balance at once while waiting for huge coefficients.
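Settling two simultaneous bets in one round is just the single-bet rule applied to each panel. The hypothetical sketch below pairs a safe ×1.2 auto-cashout bet with a riskier one, as suggested above, and reports both the total payout and the net result for the round; the stakes are illustrative, and a target equal to the crash point is again assumed to pay out.

```python
def settle_two_bets(bets: list[tuple[float, float]], crash_point: float) -> tuple[float, float]:
    """Return (total payout, net result) for up to two bets in one round.

    Each bet is (stake, cash-out target). A bet pays stake * target if the
    target was reached before the plane flew away (target <= crash point).
    """
    if not 1 <= len(bets) <= 2:
        raise ValueError("Aviator allows one or two bets per round")
    payout = sum((stake * target for stake, target in bets if target <= crash_point), 0.0)
    staked = sum(stake for stake, _ in bets)
    return payout, payout - staked


bets = [(100.0, 1.2), (50.0, 2.5)]  # first bet: auto cashout at x1.2; second: riskier
for crash in (1.1, 2.0, 3.0):
    print(crash, settle_two_bets(bets, crash))
```

When only the ×1.2 bet lands, its payout covers most of the total stake, which is the point of pairing a safe bet with a risky one.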

None of the strategies and tactics described here is a cure-all. You can always find an approach of your own, take a risk and win. If you've come up with your own successful plan for making money in Aviator, feel free to share it in the comments on our site.

How to Play Aviator?

Getting started is not hard at all. Most online casinos offer Aviator to their customers. All the player needs to do is:

- Choose a gaming site that suits you.

- Go to the official website of the chosen online casino.

- Open the Crash Games category; more often than not, Aviator is featured prominently in the menu.

- Launch the game.

Important! Unregistered users can only play in demo mode. Demo play carries no risk but gives the player no real reward either. To play for real money, you first need to register and top up your game balance.

Promo Codes for Aviator

The most generous and profitable promo code for Aviator kz to date is offered by Spribe's official website.

Aviator In-Game Chat

The chat panel is on the right side of the game interface (or opens when you click the chat icon in the top-right corner). In Aviator's chat you can talk with other players. The biggest wins are posted here automatically.

Aviator Demo Version

The Aviator demo is available on the site to all registered players with a zero account balance.

FAQ: Frequently Asked Questions about Aviator

How is Aviator different from other slots?

Although this game is classed as an online casino slot, it is unlike standard slot machines: there are no paylines, reels or symbols.

Where can I play Aviator?

Many gambling sites let their customers play Aviator both for free and for real money. You just need to find a casino that offers the game. You can find a list of platforms in our reviews.

Can I play Aviator for free?

Yes. The provider has included a demo mode for this. It lets anyone check the quality of the game and learn the basics of the gameplay.

How do I place real bets in Aviator?

To play for real bets and real winnings, choose an online casino that has the game, register (or download the app), make a deposit and play. Then, before the round starts, set your bet and wait for the round to begin.

How long is a round in Aviator by Spribe?

In Aviator, each round lasts from 8 to 30 seconds; the higher the multiplier climbs, the longer the round lasts. As the multiplier grows, so do your potential winnings.

What is the minimum bet in Aviator?

The minimum bet per round in Aviator is just 35 tenge. This is a great chance to try out tactics on a small budget. Once you're confident in your chosen strategy, you can move to larger bets and, with them, potentially larger profits. There are also quick-select bets of 350, 700, 3,500 and 7,000 tenge. When you enter a bet manually, the step is 35 tenge.
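The limits in this FAQ (35-tenge minimum, 35-tenge manual step, 50,000-tenge maximum from the next answer) can be expressed as a small validation helper. This is a hypothetical sketch using only those quoted figures; `is_valid_bet` and `snap_bet` are illustrative names, and the real limits are enforced by the game and the casino.

```python
MIN_BET = 35        # tenge, minimum bet per round (FAQ figure)
BET_STEP = 35       # tenge, step when typing an amount manually
MAX_BET = 50_000    # tenge, maximum single bet (FAQ figure)
QUICK_BETS = (350, 700, 3_500, 7_000)  # quick-select amounts


def is_valid_bet(amount: int) -> bool:
    """True if the amount is within the limits and lands on the 35-tenge step."""
    # MIN_BET is itself a multiple of BET_STEP, so checking amount % BET_STEP suffices.
    return MIN_BET <= amount <= MAX_BET and amount % BET_STEP == 0


def snap_bet(amount: int) -> int:
    """Round a typed amount down to the step, then clamp it into the allowed range."""
    highest = MAX_BET - MAX_BET % BET_STEP   # largest step multiple not above MAX_BET
    snapped = amount - amount % BET_STEP
    return max(MIN_BET, min(snapped, highest))


print(all(is_valid_bet(b) for b in QUICK_BETS))  # True
print(snap_bet(100))  # 70
```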

What is the maximum bet?

The maximum single bet in Aviator is 50,000 tenge. But that doesn't mean you're limited to one bet: the game lets you place two bets at once.

What is the lowest coefficient in the game?

The lowest multiplier in Aviator is ×1. It appears fairly rarely, on average once every 50 rounds. Multipliers of ×1.20 and below are also considered losing values. These occur more often than ×1: on average up to 10 times per 100 rounds (10% of all rounds).

What is the highest coefficient in Aviator?

The maximum coefficient in Aviator is ×200. This value doesn't come up often: in our observations, it happens once every 60 to 80 minutes. In other words, a coefficient above ×100 appears on average about once every 250 rounds. In any case, we don't recommend relying on this multiplier; look for an edge in the more frequent ×2 or ×3 instead.
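Frequencies like "once every 50 rounds" or "10 rounds in 100" can be measured from the multiplier history at the top of the game window. The hypothetical helpers below compute those shares from a list you copy down; the sample list is made up purely to show the call and is not real game data.

```python
def share_at_or_below(history: list[float], threshold: float) -> float:
    """Fraction of rounds whose multiplier was at or below `threshold`."""
    if not history:
        raise ValueError("history is empty")
    return sum(1 for m in history if m <= threshold) / len(history)


def share_above(history: list[float], threshold: float) -> float:
    """Fraction of rounds whose multiplier was above `threshold`."""
    if not history:
        raise ValueError("history is empty")
    return sum(1 for m in history if m > threshold) / len(history)


sample = [1.0, 1.15, 2.3, 1.8, 150.0, 1.2, 3.4, 1.05, 2.0, 5.6]  # made-up rounds
print(share_at_or_below(sample, 1.0))   # 0.1
print(share_at_or_below(sample, 1.2))   # 0.4
print(share_above(sample, 100.0))       # 0.1
```

A ten-round sample says little; rates like these only settle down over hundreds of rounds.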

Registering for Aviator

Registering for Aviator kz means registering at an online casino that offers the game. Here is an example of how registration works:

- Go to the site's current working mirror.

- Choose a registration method and fill in the required forms and fields.

- That's it. All that's left is to top up your deposit and start playing for money.

How to Top Up Your Aviator Account?

Topping up your Aviator balance takes less than a minute and involves just three steps.

- Press the one-click top-up button in the top-right corner of the screen.

- Choose a deposit method and enter the amount you want.

- Confirm the payment.

Bingo! Now you can start playing for real money in Aviator by Spribe.